Weeldi Wins Innovation of the Year Award at the ETMA - Enterprise Technology Management Conference - 4/15/2024

Innovation of the Year

SAN FRANCISCO, CA, April 15, 2024 - Weeldi is proud to announce that it has received the Innovation of the Year award at the ETMA - Enterprise Technology Management Association conference held in Puerto Rico this past week. This recognition celebrates Weeldi's Web Automation Studio and its impact on the Enterprise Technology Management sector.

Weeldi's Web Automation Studio garnered attention from industry leaders for simplifying stable, scalable web automation for enterprises. This award underscores Weeldi's dedication to addressing the automation gap prevalent in the Enterprise Technology Management industry, where accessing and utilizing vendor APIs can be challenging.

The Weeldi Automation Studio represents a significant advancement in web automation technology. It empowers enterprises to create and manage stable, scalable automations with no coding required. This user-friendly approach makes complex tasks accessible to all users, irrespective of their expertise level.

"We are honored to receive the Innovation of the Year award at the ETMA conference," said Mathieu Guilmineau, Co-Founder and CTO at Weeldi. "This recognition reaffirms our commitment to innovation in web automation and making it accessible to all users. We would like to thank ETMA and industry leaders for this very special award."

With this recognition, Weeldi remains dedicated to its mission of innovation and empowerment. The company aims to continue making web automation stable, scalable, and user-friendly, enabling all users to automate their most time-consuming and tedious tasks on the web.

About Weeldi

Headquartered in the San Francisco Bay Area, Weeldi enables companies of any size or technical ability to stably automate processes on the web, at scale, through its web service API or user interface, with no coding required.

10 reasons web automation is essential for expense management apps

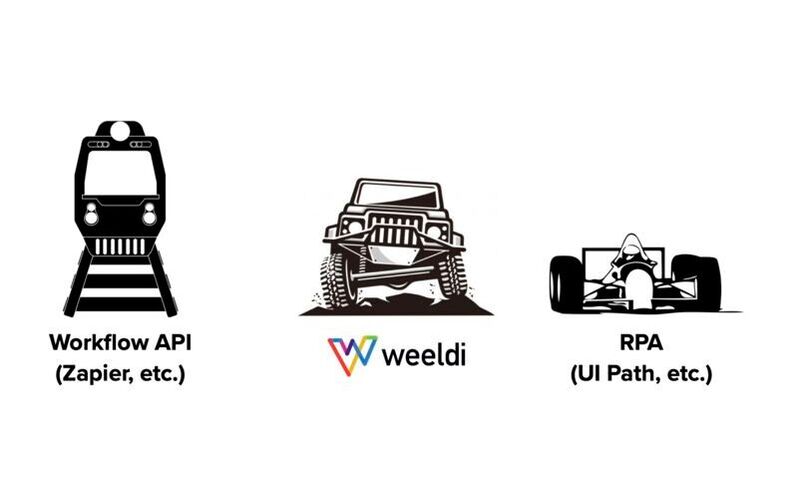

Web Automation for Expense Management Applications The effectiveness of an expense management application relies heavily on the quality of the data driving it, and the standard of data in expense management is determined and gauged through 3 crucial factors: accuracy, freshness, and breadth. Below are 10 reasons why web automation is essential for providers of expense management apps. 1. Data isn’t always available via API In the realm of expense management apps, crucial usage and expense data points are not always accessible through APIs. Instead, this data is often scattered across multiple customer-facing vendor web portals or applications. Web automation is essential to bridge this gap by enabling the seamless extraction of vital data from these non-API data sources. 2. Security and efficiency While employing low-cost labor for collecting customer data from various vendor web portals and applications is an option, it's important to consider that finding and retaining such labor is becoming increasingly challenging and can pose difficulties in managing security risks. Automating these tasks not only enhances the overall quality of your expense management application's performance but also contributes to increased profit margins in the long term and improved security. 3. Increase Net Revenue Retention (NRR). In today’s modern age of SaaS, customers expect a broad and seamless flow of data into their applications. Eliminating manual data-loading processes for your customers not only enhances product adoption but also fosters account growth and lowers churn. Conversely, relying on customers to load critical data themselves often results in tasks being overlooked, leading to missed value. Customers who do not realize the full value typically do not experience growth and may eventually churn. 4. The long tail Customers expect their expense management application providers to oversee all relevant category spending. 
Web automation empowers you to address a wide array of vendors, extending support beyond the mainstream. This capability to handle the "long tail" of vendors ensures that you remain both versatile and comprehensive in meeting customer needs. 5. Differentiation As an expense management application category matures, distinguishing oneself from competitors becomes increasingly challenging for providers in the category. Web automation offers the agility to access unique data sets, facilitating the development of innovative and distinctive new features. This positive differentiation can help your application stand out above the competition and lead to more head-to-head wins. 6. Speed to ROI is king Prudent customers buy with a specific ROI timeline in mind. The quicker you can ingest your customers' data into your expense management app, the sooner you can showcase an ROI. Utilizing web automation allows you to retrieve and process data from the most accessible entry points—namely, the vendor web portals and application user interfaces that customers already use regularly—without incurring API costs or encountering roadblocks. This capability significantly expedites deployments by facilitating swift integration. 7. Vendors are aligned with their customers and revenue Just like you, vendors prioritize enhancing their offerings to boost revenue. Allocating valuable resources to construct useful APIs that facilitate access to expense data, a move that might initially diminish their revenue, typically remains a perpetual lower priority. Consequently, usage and expense data APIs are often either unavailable, challenging to access, incomplete, or unreliable. 8. Scalability As your expense management application business grows, having a scalable solution becomes critical. Web automation enables expense management applications to scale efficiently by handling the growing frequency and overall volumes of data acquisition. 
This ensures that your expense management application maintains a quality user experience as you grow, without encountering roadblocks relating to labor quality and costs, or vendor API costs, changes, or limits. 9. Predictability and accuracy equate to credibility Automating repetitive data collection tasks ensures a predictable flow of data and significantly minimizes the risk of errors. This predictability and accuracy are paramount for establishing credibility in expense management applications. 10. Traditional tools don’t scale well Modern web automation surpasses the limitations of traditional homegrown scripting and Robotic Process Automation (RPA) tools. Unlike rigid scripting languages and intricate RPA setups, web automation provides a truly ready-to-use-out-of-the-box, intuitive approach to website automation. This approach empowers non-developers to both create and maintain automations quickly and efficiently. Why Weeldi While APIs are the preferred means of transferring data from vendors to expense management applications, they are not always available, accessible, or reliable. Solutions like RPA, promoted to address these gaps, often involve expensive and complex configurations, sometimes even requiring custom development for an enterprise-ready web automation solution. Alternatively, choosing purpose-built solutions for web automation could save both time and money. Companies like Weeldi, for instance, provide web automation out-of-the-box, eliminating the need for costly configurations or coding. Additionally, you pay based on results, not per bot-hour. Weeldi's renewed PCI Compliance reaffirms its position as the premier, trusted No-Code website automation solution for payment and procurement processes involving credit card data.

San Francisco, CA — Weeldi, the premier no-code solution for automating website tasks, is proud to announce the successful renewal of its PCI DSS Level 1 Certification. This achievement reaffirms Weeldi's position as the industry's first fully cloud-based, no-code website automation platform to attain this certification. In a landscape where companies and application providers strive to streamline labor-intensive payment and procurement processes involving a multitude of global vendors without the necessary APIs for automated electronic payments, Weeldi stands as a gateway to limitless PCI Compliant payment automation possibilities. It empowers non-developers to construct robust, scalable payment automations in mere minutes. Since its inception, Weeldi has been a game-changer, enabling customers to automate tasks on websites that have stymied traditional RPA tools, web drivers, browser automation tools and in-house solutions. Its out-of-the-box capabilities extend to automating beyond logins, past OTP (One-time Passwords), navigating around bot deterrents, handling dynamic websites, overcoming CAPTCHAs, working with shadow DOM, and adapting to website downtime, among a growing list of other website automation challenges. Moe Arnaiz, Co-Founder and CEO of Weeldi, expresses the company's enthusiasm about the renewed PCI Level 1 Certification, stating, "This certification represents the highest level of assurance for a service provider, enabling us to continue our growth as a trusted solution for stable payment and procurement automation." Arnaiz continues, "Globally, there are numerous vendors spanning industries like telecommunications, utilities, technology (e.g. SaaS and cloud), shipping, and more, who lack accessible APIs or electronic methods to support the payment and procurement automation demanded by their end customers. 
With just a few additional minutes invested, these customers can harness our PCI Compliant website automation solution to securely automate these processes directly on the same customer-facing websites they presently navigate manually." PCI DSS (Payment Card Industry Data Security Standard) comprises a set of security standards developed by the credit card industry to safeguard payment systems against data breaches. Service providers handling over 300,000 transactions annually are obligated to adhere to the rigorous PCI Level 1 requirements. Weeldi's attainment of PCI Level 1 Service Provider Certification follows its achievement of SOC 2 Type II attestation in August 2023. About Weeldi Headquartered in the San Francisco Bay Area Weeldi enables companies of any size or technical ability to stably automate processes on websites, at scale, through its web service API or user interface — with no coding required. SAN FRANCISCO, CA — August 4, 2023: Weeldi, the easiest way to automate any task you do on a website w/ no coding required, announced that it has successfully completed the System and Organization Controls (SOC) 2 Type 2 audit in accordance with attestation standards established by the American Institute of Certified Public Accountants (AICPA). Conducted by Prescient Security, a global top 20 independent audit and penetration testing company, and streamlined by Secureframe the premier automated compliance platform, the attestation affirms that Weeldi’s information security practices, policies, procedures, and operations meet the SOC 2 Type 2 standards for security, availability, and confidentiality. As companies use outside vendors to perform activities that are core to their business operations and strategy, there is a need for more trust and transparency into cloud service providers’ operations, processes, and results. 
Weeldi’s SOC 2 Type 2 report verifies the continuance of internal controls which have been designed and implemented to meet the requirements for the security principles set forth in the Trust Services Principles and Criteria for Security. It provides a thorough review of how Weeldi’s internal controls affect the security, availability, and processing integrity of the systems it uses to process customers’ data, and the confidentiality and privacy of the information processed by these systems. This independent validation of security controls is crucial for customers. “Completing another SOC 2 Type 2 attestation reinforces Weeldi’s consistent commitment to the security, availability, and processing integrity of Weeldi’s no-code automation platform, while automating many of the compliance processes with Secureframe ensures consistent and active management, and improved near real-time visibility into our compliance health,” says Mathieu Guilmineau, Co-Founder & CTO of Weeldi. “Our customers can feel confident that we are taking security seriously at Weeldi as we continue to invest in the best tools available to improve our security and compliance processes.” In addition to a SOC 2 Type 2 attestation, Weeldi is a PCI DSS Certified Level 1 Service Provider through The Payment Card Industry Security Standards Council and continues to make enhancements to its infrastructure by adding additional layers of redundancy and increasing monitoring coverage of its platform, while ensuring it remains the easiest and most stable way to automate any process customers do on a website. About Weeldi Headquartered in the San Francisco Bay Area Weeldi enables companies of any size or technical ability to stably automate processes on websites, at scale, through its web service API or user interface — with no coding required. 10 key considerations for successful RPA-based transaction automation on websites Traditional RPA for transaction automation on websites

Traditional RPA bot platforms are powerful tools. However, if you are attempting to use them for transaction automation on websites, you will need to address the following items in order to be successful at scale.

1. Process Engineering

Successful automation starts with a clearly defined, touchless process, with identified variables and a clear understanding of their impact on subsequent steps. If the process lacks predictability or organization, it may be necessary to narrow the scope of automation or further prepare the process before automation can be implemented. This eliminates the need for individual heroics and ensures a smoother automation journey.

2. Data Quality

Accurate and reliable transaction automation relies on high-quality data. To ensure successful automation, it is crucial to maintain clean, structured, and accessible data that aligns with the website where automation will take place. This includes storing and organizing relevant information such as login credentials, payment details, and product or service specifications. For instance, if the website stores the SKU for an "iPhone 14 128GB Black" as separate fields with slightly different naming conventions, your data must reflect this format accurately. This alignment is necessary because automation does not involve human interpretation and relies solely on the provided data. Additionally, it is good practice to separate the data transformation layer from the automation steps to simplify maintenance. This way, you can distinguish between automation issues and data issues if any failures occur.

3. Point-of-No-Return (PoNR)

When automating transactions on websites, the stability and uptime of the website are beyond your control and therefore unpredictable. As a result, an automation often needs multiple attempts to complete successfully, so it is crucial to incorporate configurable reattempt logic to ensure scalable automation on websites. However, while implementing reattempt logic, it is essential to also prevent unintended transactions that could result in accidental orders or payments. To mitigate this risk, each transaction automation should include a Point-of-No-Return (PoNR) defined within the automation process. The PoNR is the step from which reattempts should no longer be made. For common transactions like payments or product/service orders, the PoNR is typically set at the Submit button. This ensures that once the transaction reaches this point, further reattempts are avoided, minimizing the possibility of unintended actions. A well-defined PoNR lets you strike a balance between successful reattempts and preventing unintentional transactions.

4. Clearing Your Cart

When automating transactions that involve ordering or modifying products or services, it is important to consider how the website where the automation will take place remembers sessions. For instance, many eCommerce websites store shopping carts from previous sessions but not from concurrent sessions. This means that if a user logs in manually or if another automation stopped before completing an order, there may be leftover items in the shopping cart associated with that data source. Furthermore, when multiple automations are running concurrently, shopping carts typically do not automatically update each other. This characteristic can be leveraged to run automations concurrently, allowing for increased scalability. To ensure a smooth transaction process and avoid unexpected order placements, it is recommended to clear the shopping cart as a step at the beginning of each transaction automation for product or service orders. This step eliminates any remnants from previous sessions or automations, providing a clean starting point for the automation.

5. Security and Compliance

Make sure to select an automation tool that prioritizes security and compliance by adhering to frameworks such as PCI DSS and SOC 2. Additionally, ensure that the chosen tool offers the flexibility to manage sensitive information like personally identifiable information (PII), financial data, or credentials by integrating with internal or third-party vaults such as AWS Secrets Manager, Basis Theory, or 1Password.

6. Build a Failure First

Test your automation by incorporating deliberate failures, such as using test credit card numbers or intentionally leaving fields blank. This approach allows thorough testing without executing transactions during the process.

7. Handling Website Changes

When automating on websites, it is crucial to be prepared for and effectively manage the changes that frequently occur. Websites often have dynamic page layouts that can be influenced by alerts, advertisements, and pop-ups. The underlying structure of the website, represented by the Document Object Model (DOM), is constantly evolving, leading to changes in element tags and potentially inconsistent naming conventions. Additionally, the positions of items on a webpage may change over time, making reliance on XY coordinates inadequate for accurate targeting. To ensure stable and reliable website automation without overwhelming your resources, it is essential to utilize a solution that can automatically adapt to these changes. This solution should be capable of identifying and addressing modifications in the website's structure and layout, and of surfacing only the relevant changes to human operators, who can then exercise their judgment.

8. Bot Deterrents

Take into account the bot-blocking measures deployed by Content Delivery Networks (CDNs) and other security mechanisms. These measures are activated when a website access pattern indicates the presence of a bot, such as excessive speed, frequent requests, concurrent access, usage of non-trusted IP addresses, headless browsers, and other factors. It is crucial to find a solution that can adapt to these constantly evolving bot deterrents, enabling you to avoid them.

9. CAPTCHAs and MFA

Websites often enforce CAPTCHA and/or multi-factor authentication (MFA). CAPTCHA has become increasingly complex, sometimes even invisible to humans. MFA steps, such as receiving OTPs via email, text, or authenticator apps, can be triggered unpredictably. To overcome these potential blockers, it is essential to find a solution that can effectively solve CAPTCHA, avoid MFA challenges, and adapt to the evolving nature of both.

10. Infrastructure and Scalability

To scale your transaction automation, consider migrating to the cloud. Allocate DevOps resources for deploying, managing, and scaling your automation bots. Conduct thorough testing to ensure infrastructure compatibility and security, mitigating the risk of unintended transactions. Additionally, ensure that your automation solution offers APIs, enabling you to automate transaction management at scale.

Why Weeldi

Traditional RPA platforms require complex configuration and custom development for stable and scalable transaction automation on websites. In contrast, purpose-built solutions like Weeldi address these considerations and more out of the box, saving time and costs. With Weeldi, there is no need for extensive configuration or coding, making it a cost-effective choice for enterprise-ready website automation.

Presented at the ETMA Conference 2022 in Nashville, TN - 10/05/2022

This is an update to a post originally made in February 2020.
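The reattempt logic and Point-of-No-Return described in consideration 3 of the transaction automation list can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Weeldi or any RPA product implements it: `Step`, `run_with_ponr`, `PastPointOfNoReturn`, and `max_attempts` are hypothetical names, and each step is assumed to signal failure by raising an exception.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    name: str
    action: Callable[[], None]  # assumed to raise an exception on failure
    is_ponr: bool = False       # True for the committing step (e.g. clicking Submit)


class PastPointOfNoReturn(Exception):
    """A failure at or after the PoNR: the outcome is unknown, so never retry automatically."""


def run_with_ponr(steps: List[Step], max_attempts: int = 3) -> int:
    """Run all steps in order, restarting from the first step after a failure,
    but only while the PoNR step has not been started. Returns attempts used."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        ponr_started = False
        try:
            for step in steps:
                if step.is_ponr:
                    # Mark before running: a timeout here may still have placed the order.
                    ponr_started = True
                step.action()
            return attempt
        except Exception as exc:
            if ponr_started:
                raise PastPointOfNoReturn(f"manual review needed: {exc}") from exc
            last_error = exc  # safe to reattempt from the top
    raise last_error


# Usage: the login fails once (simulated slow page), then the run succeeds.
calls = {"login": 0, "submit": 0}


def flaky_login() -> None:
    calls["login"] += 1
    if calls["login"] == 1:
        raise TimeoutError("page did not load")


def submit_order() -> None:
    calls["submit"] += 1


steps = [
    Step("log in", flaky_login),
    Step("clear cart", lambda: None),  # consideration 4: start from an empty cart
    Step("submit", submit_order, is_ponr=True),
]
attempts_used = run_with_ponr(steps)
```

The flag is set before the PoNR step runs rather than after it succeeds, because a failure during submission leaves the transaction state unknown. In that case the sketch raises a distinct exception for a human to review instead of reattempting.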

11 obstacles you will encounter using traditional RPA (Robotic Process Automation) bot platforms for process automation on websites RPA on websites Traditional RPA bot platforms are powerful tools, however, if you are attempting to use them for automation on websites you will need to ensure you have solutions for the following 11 obstacles. 1. Logins The processes you want to automate may be on the Deep Web (i.e. behind login credentials). To automate these processes you’ll need to ensure your solution can securely store and/or access your credentials. This means both vetting the security posture of your bot automation platform and supporting integrations to 3rd party credentials vaults like Basis Theory, 1 Password, Amazon Secrets, and others. In addition, credentials change and may not always be updated in your vault or bot automation platform, which means your bots must be smart enough to recognize legitimate invalid credentials and not only surface visibility to your operations personnel for action, but also ensure that your bots don’t inadvertently lock logins by reattempting invalid credentials. 2. MFA - Multi-Factor Authentication Websites may require MFA when logging in from a new or unrecognized web browser. In addition, this step can be triggered at the discretion of a website at any time. You’ll need a solution to securely minimize, keep up with and resolve various types of MFA including OTP (One Time Passcode) to email, text, or authenticator apps. 3. CAPTCHA Websites may require you to solve CAPTCHA before proceeding with your automation. You’ll need a solution to solve, avoid and keep up with various types of CAPTCHA, which are constantly evolving to be ever more intricate and even invisible to the human eye. 4. Bot Deterrents CDNs - Content Delivery Networks More and more Websites deploy CDN’s (e.g. Cloudflare, Akamai, etc.) 
which may block bots intentionally to minimize bot traffic or unintentionally through their efforts to prevent DDOS (Distributed Denial of Service) attacks and improve general website performance. These deterrents are triggered by accessing a website in a way that distinguishes a bot from a human such as too fast, too frequently, concurrently, from non-trusted IP addresses, while using headless browsers, and various other reasons. You’ll need a solution to avoid and adjust to constantly evolving bot deterrents. 5. Website Changes When you automate on websites you’ll need to be prepared to manage and adapt to change. Page layouts are rarely static due to alerts, ads, and popups. Website DOMs (Document Object Models) are constantly evolving with element tags regularly changed or inconsistently named. Items on webpages may change their position rendering XY coordinates as a sole method for locating targets insufficient. To automate stably on websites, without overwhelming your resources, you’ll need a solution that can automatically solve for these changes and only surface the changes to operations that require human judgment. 6. Infrastructure As your automation efforts gain traction, eventually you’ll need to scale, which means you’ll need to move your bots to the cloud. This will require DevOps time and expertise to deploy, manage and scale your bots in the cloud. You will also need to adjust your bots to work with the various nuanced configurations, security, and monitoring differences you’ll experience running automations in the cloud. 7. Scheduling, Intermittent Downtime, Time-Outs, and Latency You’ll need a flexible solution to schedule the frequency of bot attempts and reattempts (for failed) unattended automations. Reattempts are particularly important with automation on websites, as websites may experience temporary downtime or slow page loading, which can cause bots to fail. 
Reattempt scheduling needs to be built into your bots and configurable to minimize manual intervention and maximize the efficiency of your automations. You’ll need a solution that can handle this detail and flexibility for scheduling. 8. New Front End Frameworks and Web Widgets As the web evolves so do the frontend frameworks and web components. Your bots should be able to run in any framework such as AngularJS and ReactJS and compatible with a broad array of web components such as modals, hidden fields, shadow DOM, and sophisticated navigation and search components. In addition, your bots should evolve to account for modern web development practices. With traditional RPA, this may mean building new bots or rebuilding your existing bots. 9. A/B Testing Websites can have multiple versions depending on region, customer type, and A/B testing. To adequately support this common scenario you will have to build and support multiple versions of your bot and ensure your automation tool is intelligent enough to timely identify which version of the Bot should be used for each Job. 10. Rogue Scripts, Insecure Connections, and Mixed Content Some websites include rogue scripts or libraries, which will trigger timeouts, and others insecure connections or content, which result in modern web browsers rejecting all or part of the page. You’ll need a solution to manage these obstacles. 11. Compliance The processes you want to automate may require or access sensitive information like PII (personally identifiable information) or financial data (e.g. credit card, banking information, etc.). This means you must validate that your solution is both secure and within compliance for your automation use case. (e.g. PCI DSS, GDPR, SOC2 Type II, etc.). Why Weeldi While traditional RPA platforms can be powerful tools, they often require costly, intricate configuration and even custom development to deliver an enterprise-ready solution for stable, scalable process automation on websites. 
Alternatively, considering purpose-built solutions for this use case could save you time and money. As an example, companies like Weeldi provide solutions to these 11 obstacles and many others out of the box, with no costly configuration and no coding required.

San Francisco, CA - Weeldi, the easiest way to automate any task you do on a website with no coding required, announced today that it has successfully received PCI DSS Level 1 Certification, making it the first no-code, fully cloud-based website automation solution to receive this designation.

As companies and application providers look to automate tedious manual payments with thousands of vendors worldwide who lack the APIs for automated electronic payments, Weeldi opens up endless PCI Compliant payment automation possibilities by enabling non-developers to build stable, scalable payment automations in just minutes.

From day one, Weeldi has helped customers automate on websites where traditional RPA tools, web drivers, and homegrown solutions struggle to deliver a stable and scalable automation experience, including behind logins, past OTP (One-time Passwords), through bot deterrents, on dynamic websites, around CAPTCHAs, with shadow DOM, between website downtime, and a growing list of other hurdles.

“We are very excited and proud to receive our PCI Level 1 Certification. This is the highest level of assurance a service provider can receive and it unlocks huge opportunities for us in the payment and procurement automation space,” says Moe Arnaiz, Co-Founder and CEO of Weeldi. “There are thousands of vendors worldwide like telcos, utilities, technology providers, shipping providers, and several others that don’t have (or make accessible) the APIs or other electronic means to support the payment and procurement automation their end customers want. With a few extra minutes invested, these customers can now use our PCI Compliant website automation solution to automate these processes securely on the same customer-facing websites they’re using to do this work manually today.”

PCI DSS (Payment Card Industry Data Security Standard) is a set of security standards created by the credit card industry to protect payment systems from data breaches. Service providers that process more than 300,000 transactions annually are required to meet the higher PCI Level 1 requirements. Weeldi’s PCI Level 1 Service Provider Certification comes on the heels of achieving its SOC 2 Type II attestation in August 2022.

About Weeldi

Headquartered in the San Francisco Bay Area, Weeldi enables companies of any size or technical ability to stably automate processes on websites, at scale, through its web service API or user interface, with no coding required.

SAN FRANCISCO, CA - August 29, 2022: Weeldi, the easiest way to automate any task you do on a website with no coding required, announced that it has successfully completed the System and Organization Controls (SOC) 2 Type 2 audit in accordance with attestation standards established by the American Institute of Certified Public Accountants (AICPA). Conducted by risk3sixty, a leading professional services firm, the attestation affirms that Weeldi’s information security practices, policies, procedures, and operations meet the SOC 2 Type 2 standards for security, availability, and confidentiality.

As companies use outside vendors to perform activities that are core to their business operations and strategy, there is a need for more trust and transparency into cloud service providers’ operations, processes, and results. Weeldi’s SOC 2 Type 2 report verifies the existence of internal controls which have been designed and implemented to meet the requirements for the security principles set forth in the Trust Services Principles and Criteria for Security. It provides a thorough review of how Weeldi’s internal controls affect the security, availability, and processing integrity of the systems it uses to process customers’ data, and the confidentiality and privacy of the information processed by these systems. This independent validation of security controls is crucial for customers. “Obtaining our SOC 2 Type 2 certification reinforces Weeldi’s ongoing commitment to the security, availability, and processing integrity of Weeldi’s no-code automation platform,” says Mathieu Guilmineau, Co-Founder & CTO of Weeldi. “Our customers can feel confident that we are taking security seriously at Weeldi.” In addition to a SOC 2 Type 2 attestation, Weeldi continues to make enhancements to its infrastructure by adding additional layers of redundancy and increasing monitoring coverage of its platform, while ensuring it remains the easiest and most stable way to automate any process customers do on a website. About Weeldi Headquartered in the San Francisco Bay Area Weeldi enables companies of any size or technical ability to stably automate processes on websites, at scale, through its web service API or user interface — with no coding required. 10 obstacles you need to consider when automating past MFA. Automating repetitive tasks on the web can save your business time and money while enabling your human capital to focus on more strategic tasks. 
However, as websites beef up their security posture by implementing Multi-Factor Authentication (MFA), this security feature can be a hindrance to your automation objectives. If you’re automating behind logins with MFA, you’ll need to ensure you have solutions for the following 10 obstacles.

1. Configuration
You’ll need a place for your One-Time Passcodes (OTP) to go, so you’ll have to set up an email inbox to capture OTP email forwards. Further, if you’ll be forwarding these emails to an outside domain, you’ll have to make sure your email system is configured to accommodate forwarding from one outside domain to another outside domain.

2. Spam Filters
If you’re not receiving OTP codes, you’ll need to check your spam filter. Email spam filters may automatically block emails from the websites sending you OTP codes. If this is the case, you’ll have to adjust your email system spam filter accordingly.

3. Non-Email MFA (SMS or Authenticator Apps)
To further bolster security, more and more websites are moving away from email and require SMS or Authenticator Apps (e.g. Authy, Duo, Google Authenticator, etc.) for MFA. You’ll need a solution that can capture and resolve these methods of MFA in an automated fashion.

4. Identifying OTP Codes
For security purposes, OTP codes are generally sent without referencing a specific login. This can make it difficult to correlate OTP codes with their associated logins while you’re running concurrent automations. You’ll need a solution to ensure you can properly correlate OTP codes with their respective logins.

5. OTP Delays and Failures
Due to various system issues, OTP codes don’t always arrive in the same order as you requested them, and in some instances they don't arrive at all. This means you’ll need a solution that not only correlates OTP codes with their respective logins but also automatically reattempts the OTP process if the correct passcode cannot be identified or never arrived.

6. Inbox Noise
Often the same email address that receives OTP codes will also receive various other automated emails such as confirmations, notices, and marketing messages, and/or other manual email communication. You’ll need a solution to sift through this email noise and home in on the correct OTP codes.

7. Email Purging
Data security is critical. Since the same email addresses that receive OTP codes will likely receive various other emails, which may contain sensitive data and high volumes of data, it is critical that you permanently purge your OTP inbox frequently to avoid accidental data leaks or a full email inbox.

8. Scaling Automated OTP Resolution
As your automation volume grows, you will likely run into email throttling by your email provider and increased difficulty in associating OTPs with the proper login. You’ll need a solution to ensure high automation volume doesn’t break your automated OTP resolution process.

9. OTP Code Extraction from Emails
Extracting the correct code from an email presents its own challenges, such as other sequences of numbers throughout the email body, layered multipart email formats, base64 content encoding, or even a code embedded in an image. You’ll need a solution to ensure you can extract the OTP code accurately in these scenarios.

10. Minimizing OTPs
Similar to your experience accessing websites manually, OTPs generally don’t present themselves on every login unless they have been configured to do so. However, if your login looks suspicious (for example, it appears to come from a bot or from an unrecognized browser), you’re likely to be presented with an OTP on each login. This can slow down automations and result in more failures. You’ll need a solution to minimize the presence of OTPs once you’ve passed one.

Why Weeldi
While Multi-Factor Authentication (MFA) is a powerful tool to bolster website security, it can become a hindrance to scaling your automation objectives.
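To make obstacle 9 concrete, here is a minimal Python sketch of OTP extraction from a multipart, base64-encoded email using only the standard library. It is an illustration under stated assumptions, not a description of how Weeldi does it: the six-digit code length, the cue words in `OTP_PATTERN`, and the helper names `extract_otp` and `build_sample_email` are all hypothetical, and codes embedded in images are out of scope.

```python
import re
from email import message_from_string
from email.mime.multipart import MIMEMultipart
from email.mime.text import MIMEText
from typing import Optional

# Hypothetical rule: a cue word ("passcode", "code", "OTP") followed closely
# by exactly six digits. Real senders vary, so this would be tuned per website.
OTP_PATTERN = re.compile(r"(?:passcode|code|OTP)\D{0,40}?(\d{6})\b", re.IGNORECASE)


def extract_otp(raw_email: str) -> Optional[str]:
    """Return the first OTP found in a text/plain part, or None."""
    message = message_from_string(raw_email)
    for part in message.walk():  # visits every part of a layered multipart email
        if part.get_content_type() != "text/plain":
            continue
        payload = part.get_payload(decode=True)  # undoes base64 / quoted-printable
        if payload is None:
            continue
        charset = part.get_content_charset() or "utf-8"
        text = payload.decode(charset, errors="replace")
        match = OTP_PATTERN.search(text)
        if match:
            return match.group(1)
    return None


def build_sample_email() -> str:
    """Build a multipart, base64-encoded sample email with a distracting number."""
    message = MIMEMultipart("alternative")
    message["Subject"] = "Your sign-in code"
    body = "Order 1234567 shipped. Your one-time passcode is 482913."
    # Passing an explicit utf-8 charset makes MIMEText base64-encode the body.
    message.attach(MIMEText(body, "plain", "utf-8"))
    message.attach(MIMEText("<p>See the plain-text part.</p>", "html", "utf-8"))
    return message.as_string()


if __name__ == "__main__":
    print(extract_otp(build_sample_email()))
```

Requiring a cue word before the digits is what lets the sketch skip the seven-digit order number in the sample body. Correlating the extracted code with the right login (obstacles 4 and 5) would still need to be handled separately.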
Attempting to solve MFA challenges with custom code is a perpetual, resource-intensive effort that can distract from your focus on automating. Companies like Weeldi provide an out-of-the-box solution to these 10 MFA obstacles and many others with no costly configuration or coding required. In addition, you pay for the results, not for integrator hours, development hours, and/or per bot hours that may not result in your long-term automation success.

SAN FRANCISCO, CA - September 23, 2021: Weeldi, the easiest way to automate any task you do on the web w/ no coding required, announced that it has successfully completed the Service Organization Control (SOC) 2 audit. Conducted by risk3sixty, a leading professional services firm, the audit affirms that Weeldi’s information security practices, policies, procedures, and operations meet the SOC 2 standards for security, availability, and confidentiality.

As companies use outside vendors to perform activities that are core to their business operations and strategy, there is a need for more trust and transparency into cloud service providers’ operations, processes, and results. Weeldi’s SOC 2 report verifies the existence of internal controls which have been designed and implemented to meet the requirements for the security principles set forth in the Trust Services Principles and Criteria for Security. It provides a thorough review of how Weeldi’s internal controls affect the security, availability, and processing integrity of the systems it uses to process customers’ data, and the confidentiality and privacy of the information processed by these systems. This independent validation of security controls is crucial for customers.

“Obtaining our SOC 2 certification reinforces Weeldi’s ongoing commitment to the security, availability, and processing integrity of Weeldi’s no-code automation platform,” says Mathieu Guilmineau, Co-Founder & CTO of Weeldi. “Our customers can feel confident that we are taking security seriously at Weeldi.”

In addition to SOC 2 compliance, Weeldi continues to make enhancements to its infrastructure by adding layers of redundancy and increasing monitoring coverage of its platform.

A common misconception is that automation on the web is unstable, high maintenance, and hard to scale because websites simply change too often, resulting in a constant cat-and-mouse game.

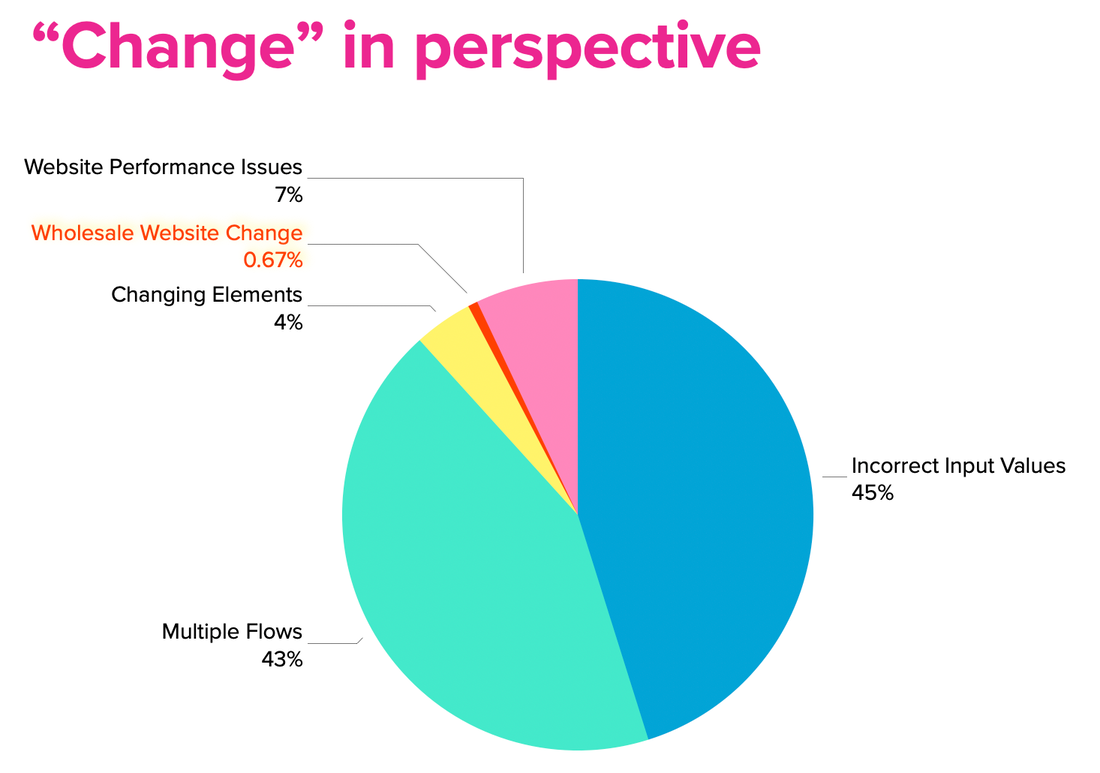

However, after looking at over 1M automations on Weeldi over multiple months, the numbers tell a different story.

45% of automations fail because the customer input data is wrong.
These are issues like invalid log-in credentials, invalid account numbers, incorrect scheduling, incorrect payment information, and attempting to capture data that doesn't exist. Weeldi’s API and User Interface allow users to update this input data on demand and classify errors by customizable error type with supporting screenshots, so users can quickly (and automatically) identify and fix automations failing due to incorrect input data. Ultimately, if you want automation to succeed, you need your input data to be clean and up-to-date.

43% of automations fail because of multiple website versions.
Users most commonly believe this to be a change to a website; however, it is instead a new version of the same website that they have yet to come across while automating. As you scale the Job volume behind each automation, you see different scenarios you didn’t see before. This is commonly a result of regional differences, A/B testing, or different account types on the same website. Weeldi’s automation engine supports multiple flows per website, allowing customers to record multiple automations per website. Then Weeldi uses AI to figure out which automation works per Job and memorizes this for future runs, increasing automation success and removing painful guesswork for users. Simply put, volume strengthens automation.

7% of initial automations fail because of website performance issues.
This means the website is down, loading too slowly, or not returning a query. Generally, these are temporary issues that occur during website updates or, more often, on websites pulling data from several legacy systems (e.g. vendor websites like utilities, telcos, and suppliers), as at any given moment one of those systems may be down.
Weeldi enables reattempt scheduling by automation, which means if a website is down, slow, or not returning an expected result, Weeldi will reattempt within a configurable timeframe until the website can complete the automation successfully.

4% of automations (if using RPA) will fail because of changing elements.
Websites are built using HTML tags. As a common example, a pay bill button may be implemented as element type BUTTON, but after a website update it may have been changed to DIV, SPAN, or INPUT. These changes are tricky because, while nothing may change to the human eye, they will stop traditional automation tools like RPA dead in their tracks. Since Weeldi automatically solves for these changes (as described below), instead of tracking failures we tracked how often our automation engine had to solve for these changes. Weeldi’s proprietary approach to automating on the web takes into account a more complete description of the webpage (not just HTML tags) and uses AI to add an abstraction layer above the webpage, allowing the Weeldi automation engine to view web pages like a human. This means if an HTML tag changes, an element moves, or an element is named differently, chances are very high that Weeldi will navigate through it, ensuring the automation completes successfully.

0.67% of automations fail because of wholesale website UI changes.
Wholesale website UI changes are often assumed to be the primary reason for web automations breaking; however, they are by far the least common reason why Weeldi automations break. The reality is that wholesale UI changes take a lot of time and endanger the user experience, which means they don't happen frequently. Weeldi’s automation engine is equipped with tools that make it clear when automations are failing and why they are failing, including supporting screenshots and error details. This makes it immediately obvious when a complete website UI change has taken place.
The next step is adjusting to the new change, and this is where Weeldi's No Code Recording Engine (a Chrome Extension that runs in your Google Chrome Browser) allows non-technical users to quickly record a new or updated web automation in just minutes.

8 areas where you can leverage AI today for automation on the web.

Artificial Intelligence (AI) is a hot and highly marketed topic, but the application of AI for automation on the web is still in its infancy. This means those laying the right foundation today will be rewarded with the compounding value of less friction and more success with future automations. Here are 8 areas where you can begin leveraging AI for automation on the web.

1) Login
Many automations on the web start by getting through a login page. AI can be leveraged to simplify the login step by looking up standard login components such as username, password, and “remember me” functionality, minimizing obstacles like MFA (Multi-Factor Authentication) and CAPTCHAs, and increasing the probability of automation success when there are login page changes.

2) Flow selection
A growing number of websites have multiple versions based on region, customer type, M&A (Mergers & Acquisitions), and/or A/B testing. Using AI you can simplify the support of multiple website versions. This can be achieved by recording multiple automation flows, by website version, then using AI to select and navigate to the correct flow. This approach adds scale and stability to the automation by eliminating the need for a human to micro-manage variability of website versions.

3) Managing website issues
Traditionally, website instability is a key culprit for breaking automations on the web. AI can help solve the most common instability issues like slow-loading webpages and webpage timeouts. It can do this by intelligently adjusting bot wait times by website, proceeding when critical components of a webpage are available (but noncritical components are not), and automatically reloading webpages with timeouts.

4) Formatting
Bot automation flows are guided by data such as dates, account numbers, and SKUs.
However, the way this data is presented on websites may vary from how you store it in your database, and even if you harmonize the two, eventual changes to the presentation of data on the web will break your automations. AI can help solve this problem by recognizing data patterns when websites make changes to the way they present data. Common examples are changes in date formats (e.g. 01/17/2021 vs. 17/01/2021), account numbers (e.g. 001234567 vs. 1234567), and SKUs (e.g. TESLA-S vs. Tesla Model S).

5) Nomenclature
Change on the web is constant as websites evolve to meet their users' expectations. For example, you may download your utility bill each month by clicking on a link that states “Invoice”, but next month that same link may state “Bill”. These types of changes, seemingly obvious to the human eye, will break traditional bot automations. By using AI you can better recognize relevant nomenclature changes such as synonyms and increase your probability of success automating on the web.

6) Navigation
Website changes go beyond nomenclature changes. Sometimes nomenclature stays the same, but the elements in the User Interface change. A common example is a website modernizing clickable links by transitioning them into buttons. This kind of change will break traditional bot automations. By storing the goal/intent of your automation rather than just the HTML element location and raw browser interactions, you can leverage AI to successfully navigate website changes.

7) Pop-ups
Websites use pop-ups to gain the attention of their users. Unfortunately, this can break brittle bot automations. By using AI you can intelligently recognize pop-ups and the content within them, and derive whether your automation should fail or close (or acknowledge) the pop-up and proceed with the automation.

8) Scheduling
Some bot automations run on a preset schedule based on an understanding that the automation can be executed successfully at that time. But what if your scheduled date is wrong?
A common example is when you command your bot automation to download a report on a specific date, but the report is consistently not available until 2-3 days later. AI can help you solve this problem by learning the optimal schedule based on historical automation success and suggesting the appropriate schedule adjustment.

Why Weeldi?
As companies attempt to automate predictably on the web, they must consider the web's dynamic nature and look to pattern recognition technologies like AI to help manage this variability. Companies like Weeldi have started laying the groundwork by designing automations that focus on user intent, rather than exclusively on static navigation steps, and then using AI to successfully navigate to the user's expected outcome. This approach proves to be a sturdy alternative for automation on the web, where change is constant, broad, and unpredictable.

1) Encryption
Sensitive data like passwords and PII (Personally Identifiable Information) required to run automations should be encrypted in transit and at rest with a cloud-based automation vendor. Also, sensitive data like passwords should never be displayed in clear text through the application UI.

2) Transparency
Access logs should be made easily available to you, showing every time sensitive data is accessed, including username, time of access, and the data accessed. Neither your users nor a cloud-based automation vendor should have the ability to edit or remove these access logs.

3) 2FA (2-Factor Authentication)
2FA adds another layer of time-sensitive security to the login process, reducing the chances of your account being hacked. This layer can be an OTP sent to your mobile device or an auto-generated code via a 2FA app. However, as hackers get more sophisticated in their ability to intercept SMS via flaws in the SS7 protocols, 2FA apps are preferred for optimal security.
Cloud-based automation vendors should provide the flexibility to require 2FA at the organization level or individual user level. Commonly used 2FA apps are Twilio Authy and Google Authenticator.

4) KMS (Key Management Service) Integration
KMS Integration allows you to integrate with your current KMS system to create and control the encryption keys used to encrypt your sensitive data. It also enables transparency into who is accessing your sensitive data and provides you with full and immediate control over who can continue to access your sensitive data. Commonly used KMS tools include AWS KMS and HyTrust.

5) Secret Manager Integration
Secret Manager integration gives you the ability to use your Secret Manager tool of choice to store sensitive data like passwords, control access to sensitive data, maintain transparency into when sensitive data is accessed, and centrally manage sensitive data. Commonly used Secret Manager tools include AWS Secrets Manager and Google Secret Manager.

6) SSO (Single Sign-On)
SSO integration allows your users to log into a cloud-based automation provider with a single ID and password following the SAML 2.0 protocol, usually centrally managed through an Identity Management provider. Commonly used Identity Management providers include Okta and OneLogin.

Why Weeldi?
As companies automate more tasks requiring sensitive data like passwords or PII (Personally Identifiable Information), they are faced with the challenge of ensuring their automations run securely. Cloud-based automation solutions like Weeldi provide transparency into sensitive data access, best-in-class security using tools like encryption, 2FA (2-Factor Authentication), and SSO (Single Sign-On), as well as integration with 3rd party KMS (Key Management Services) and Secret Manager tools to ensure your sensitive data is secure and in your control.

5 approaches to automation that are too rigid for the web.

Miles Davis could pick up his trumpet, step into a quartet, listen, adapt and produce beautiful Jazz that worked. Similar to a great Jazz musician, a good automation approach for the web has the flexibility to process dynamic inputs, adapt in near real-time and deliver just the right output. However, most traditional automation approaches are rigidly designed with little ability to adapt to change, making them brittle when deployed on the web. Below are 5 approaches to automation that are too rigid for the web.

1) Using X-Y coordinates to locate objects
Similar to longitude and latitude on a map, this approach relies on the objects on the screen to be predictably in the same location time and again, but the web is innately dynamic and objects move or could be presented differently based on the browser, device type and resolution. Advertisements, message notifications, report filter options and UI design modifications are examples of changes that will break any automation that relies on X-Y coordinates.

2) Extracting the DOM structure and looking for elements by name or structure
The DOM is a tree structure of all the elements that make up a web page. Traditional automations extract the DOM structure and look for elements precisely by their id, class or position. This approach is prone to instability as many websites eschew naming DOM elements entirely, arbitrarily change ids and class names or duplicate ids in violation of DOM specifications, causing automations to break. Also, web development trends are moving toward websites that load dynamically as you scroll through the webpage, defer loading portions of a web page, or dynamically build portions of the page after it is loaded, to provide a more seamless experience to customers with lower bandwidth. While this improves the user experience, it confuses and breaks automations that depend on a static, predictable DOM structure.
Finally, most DOM violations aren’t a stumbling block for the user experience because web browsers like Chrome and Firefox do a fantastic job of masking poor adherence to web standards. But these DOM violations will break automations and require arduous collaboration between operations and software development to investigate and resolve.

3) JavaScript containers (e.g. React, Angular, etc.)
JavaScript frameworks help software developers build consistent user experiences; however, they also hijack user interactions like mouse clicks and scrolling, which can render automations powerless, causing them to fail.

4) Using shortcuts to bypass the User Experience
To increase the speed of automations, some software developers decide to bypass the website user experience by taking shortcuts to complete automations faster. Taking these shortcuts will inevitably lead to issues like slowing down (or crashing) your target website, IP blocking, getting auto-logged out and/or accessing incomplete data. Shortcuts appear to be great until they don’t work, and they then become very difficult to investigate and repair as they were not based on the user experience to begin with.

5) Using web testing automation tools (e.g. Selenium, etc.)
To accelerate automation, rather than start from scratch, software developers may choose to utilize web testing automation tools like Selenium. However, these tools have a propensity to get jammed up by rogue JavaScript libraries, such as the ones often embedded in website analytics tools.

So when you’re automating on the web you must ditch the traditional rigid approach for an adaptive modern approach that produces beautiful automations that work.

Why Weeldi?
As companies attempt to automate stably on the web they must consider its dynamic nature.
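To illustrate why approach 2 is brittle, the following Python sketch locates a clickable element by its visible label instead of its id, class, or tag. It is a simplified illustration built on the standard library's `html.parser`, not Weeldi's engine: the hand-written `PAY_BILL` label set stands in for whatever intent or synonym recognition a real system would use, and the names `LabelFinder` and `find_clickable` are hypothetical.

```python
from html.parser import HTMLParser
from typing import List, Optional, Set, Tuple

# Tags that may wrap clickable text; <input> is handled separately below.
CLICKABLE_TAGS = {"a", "button", "div", "span"}


class LabelFinder(HTMLParser):
    """Collect (tag, visible label) pairs for elements that could be clicked."""

    def __init__(self) -> None:
        super().__init__()
        self.candidates: List[Tuple[str, str]] = []
        self._open: List[Tuple[str, List[str]]] = []  # currently open elements

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "input" and attributes.get("type") in {"submit", "button"}:
            # <input> has no inner text; its label is the value attribute.
            self.candidates.append((tag, (attributes.get("value") or "").strip()))
        elif tag in CLICKABLE_TAGS:
            self._open.append((tag, []))

    def handle_data(self, data):
        for _, chunks in self._open:  # text counts toward every open element
            chunks.append(data)

    def handle_endtag(self, tag):
        # Close the innermost open element with this tag, tolerating sloppy markup.
        for index in range(len(self._open) - 1, -1, -1):
            if self._open[index][0] == tag:
                _, chunks = self._open[index]
                del self._open[index:]
                label = " ".join("".join(chunks).split())
                if label:
                    self.candidates.append((tag, label))
                break


def find_clickable(html: str, intent_labels: Set[str]) -> Optional[Tuple[str, str]]:
    """Return the first (tag, label) whose label matches one of the intent labels."""
    finder = LabelFinder()
    finder.feed(html)
    finder.close()
    wanted = {label.casefold() for label in intent_labels}
    for tag, label in finder.candidates:
        if label.casefold() in wanted:
            return tag, label
    return None


# Hypothetical labels describing the intent "pay the bill".
PAY_BILL = {"pay bill", "pay my bill", "pay invoice"}
old_page = '<form><button id="btn-17">Pay Bill</button></form>'
new_page = '<form><div class="x9f" role="button">Pay Invoice</div></form>'
```

Calling `find_clickable(old_page, PAY_BILL)` and `find_clickable(new_page, PAY_BILL)` both succeed even though the tag, id, class, and wording differ between the two pages, whereas a selector such as `#btn-17` would match only the first. A static parse like this does not address dynamically loaded content, which would still require a real browser.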
Weeldi’s modern approach to automation is flexible and adaptive, enabling companies of any size or technical ability to stably automate processes on the web, at scale, through its web service API or user interface, with no coding required.

6 + (1) tips for deploying your automations in the cloud at scale.

Automation improves how your business scales. But how do you ensure your automations can scale?

1) Scale-Up
Your automations may vary by volume or include tight time constraints. To ensure on-time, successful delivery of automations, you must be able to predict server capacity for peak usage and have the flexibility to deploy additional server capacity in real-time to guarantee timely execution.

2) Scheduling
You must integrate the scheduling and prioritization components of your automation engine with your servers to provision adequate capacity to complete your automations on time. The scheduling and prioritization components to consider include urgency, probability of success, concurrent login acceptance (if automating behind logins), and the number of reattempts required for automations to complete successfully, amongst several others.

3) Scale-Down / Reduce Costs
To run your automations economically, you must have the ability to reallocate server resources in real-time for automations that require them, or turn down servers during periods of lower automation activity.

4) Configuration Flexibility
Not all websites are optimized for all browsing environments. One website may be friendlier on Chrome and another on Firefox. Further complicating matters, the process you’re trying to automate (e.g. downloading a file vs. submitting a web form) may have different probabilities of success depending on the configuration. By having multiple browsing configurations deployed in the cloud, you can systematically A/B test your automations to identify configurations with the highest probability of success and use those configurations moving forward. Common configuration variables include using Proxy IPs, using older browser versions, and turning off 3rd party tracking, amongst several others.

5) Security
To protect your data, it's important to disconnect data storage from the actual processing of the automation.
This enables you to secure the automation independently of the storage of the automation result, ensuring that anything bad your automation might pick up on the web does not contaminate your stored data.

6) Monitoring
On the web, many items can degrade or even crash your automations' performance. To run stable and timely automations, it is a requirement to have real-time visibility and monitoring of server health and usage.

6*) Target Website Acceptance
To ensure your automation is accepted by the target website, you must have IP flexibility, including provisioning dedicated IPs, untainted IPs, a range of customer IPs or, in some instances, leveraging Proxy IPs.

Why Weeldi?
As companies increase the volume of tasks they automate, they are faced with the infrastructure challenges of ensuring their automations run successfully, on time and at scale. Cloud-based automation solutions like Weeldi provide out-of-the-box functionality that enables you to automatically scale to meet your workload.

10 obstacles you need to consider when using Robotic Process Automation (RPA) bots for unattended automations on the public internet.

RPA on the public web
RPA bots are powerful tools; however, if you are attempting to use RPA bots for unattended automations on the public web, you’ll need to ensure you have solutions for the following 10 obstacles.

1. Log-Ins
The processes you want to automate may be on the Deep Web (behind login credentials). To automate processes behind logins, you’ll need to make sure your RPA bots can securely access the credentials necessary to complete your unattended automations.

2. Two-Factor Authentication
Websites may require two-factor authentication when logging in from a new or unrecognized web browser. In addition, this step can be triggered at the discretion of a website at any time. You’ll need a solution to avoid and resolve two-factor authentication.

3. Captchas
Websites may require you to solve captchas before granting access. You’ll need a solution to solve or avoid captchas.

4. IP Blacklisting
Websites may blacklist critical IP addresses if you are accessing them too fast, too frequently or concurrently. You’ll need a solution to ensure you do not trigger IP blacklisting.

5. Scheduling
You’ll need a flexible solution to schedule the frequency of RPA bot attempts and reattempts for failed unattended automations.

6. Schedule-and-Return
Some scenarios will require you to first initiate your automation and then return within a defined time frame to complete it. You’ll need a flexible solution to manage schedule-and-return automations.

7. A/B Testing
Websites can have multiple versions of their site depending on region, customer type and/or A/B testing. To adequately support this common scenario, you will have to build and support multiple versions of your bot.

8. Intermittent Downtime, Time-Outs and Latency
Websites on the public internet can be unstable, so your RPA bots will need to be equipped to manage instability to ensure you can complete your unattended automations successfully.
This means not aborting unattended automations too early and automatically reattempting others at a later time. In some instances, it may take several attempts over multiple hours before an automation completes successfully on a public website. You’ll need a solution to manage this website instability carefully.

9. New Front End Frameworks and Elements
As the web evolves, so do the frontend frameworks and elements. Your RPA bots should be able to run in frameworks such as AngularJS and ReactJS and handle elements such as modals and hidden fields. In addition, your RPA bots should evolve to account for new frameworks and web elements as they are introduced. In the world of RPA bots this may mean building a new bot.

10. Rogue Scripts, Insecure Connections and Mixed Content
Some websites include rogue scripts, which will trigger timeouts, and others include insecure connections or content, which result in modern browsers rejecting all or part of the page. You’ll need a solution to manage through these obstacles.

Why Weeldi
While RPA bots can be powerful tools, they often require costly, intricate configuration and even custom development to deliver an enterprise-ready solution for automating processes on the public internet. Alternatively, considering purpose-built solutions for automating processes on the public internet could save you time and money. As an example, companies like Weeldi provide solutions to these 10 obstacles and many others, out-of-the-box, with no costly configuration or coding required. In addition, you pay by the results, not per bot hour or the individual bot.

How businesses are moving from risky homegrown “scraping” solutions into secure, comprehensive Web Data Integration (WDI)

The current state of web data
Web data is the boundless unstructured data living on websites, web portals and SaaS applications. Simply put, it’s the data in your web browser. For years, web data has been something you can see, but not really “touch” or incorporate into your business systems without accessing each website via an API or building homegrown scraping solutions. But that’s about to change.

Finding the fuel to move forward
Often, the data a business needs to fuel success can be accessed by APIs, but not always, as there are countless non-API sources of information on websites, web portals, and even some SaaS applications. Take for example the many Mobile Telecom Companies that offer APIs to customers to help manage expenses, but leave out critical pieces of data, like current international usage, which is invaluable for avoiding costly roaming charges. This usage data may not be in the API, but is instead sitting as unstructured web data, trapped in a customer web portal... ripe for consumption if it could only be easily accessed at scale.

Moving past relying (solely) on APIs
While APIs are a standard means of data transfer, that doesn’t necessarily make them the most expedient or ideal. Some API access processes can take weeks, months, or longer to approve and set up, and these delays raise risks of failed deployments, missed revenue opportunities, and poor customer service. But assume API setup goes smoothly: what if your API vendor sunsets support, or decides to make significant changes that make it more difficult to work with their API? The ability to independently access web data moves from being a “nice to have” to a must, as does the need for a modern Web Data Integration (WDI) tool that can extract web data across multiple websites with the same scalability, reliability and structure as an API.
According to 2019 research from Opimas, spending on web data extraction was $2.5B in 2017 and predicted to reach nearly $7B by 2020, with the majority of spend on internal homegrown solutions. But while the overall web data extraction spend is trending up, Opimas expects a significant transition from internal spending (with expected growth at 30%) to external spending (with expected growth at a whopping 70%). (1)

So why the move toward more external solutions? In their current state, internal solutions for web data extraction are often unstable and send valuable software development resources down a proverbial rabbit hole of custom coding and never-ending maintenance in an attempt to manage data extraction templates for every data source, IP blacklisting, CAPTCHA, two-factor authentication, pacing, data access across multiple pages / websites or data behind logins, file downloads and scheduling, to name just a few. Signs are pointing toward use of modern Web Data Integration (WDI) solutions that quickly transform any unstructured web data into structured APIs, with no coding required. Specifically, these are faster, more reliable, more scalable, and more affordable alternatives to legacy homegrown and outsourced web data extraction solutions.

How modern Web Data Integration (WDI) differs from “scraping”
Modern Web Data Integration (WDI) tools are built to deliver scalable, reliable web data extraction out of the box without requiring software development for either configuration or maintenance. Scraping, by contrast, is a disjointed process of collecting web data that requires software developers to build and reactively maintain custom scraping routines, typically built with a hodgepodge of open source tools. A modern Web Data Integration (WDI) tool gives you “out of the box” ability to:

Scraping requires hourly, salaried and/or contract specialists in product management, software development and QA to build and maintain the same functionality that is available out of the box with Web Data Integration (WDI) tools as listed above. Plus, you take on the risk of:

To DIY, or Not DIY?
SMB and enterprise adoption of modern Web Data Integration (WDI) tools is on the rise. While there are many legacy, homegrown, outsourced and on-premise solutions still deployed to solve the “web data extraction needs” of competitive businesses, most do not deliver the modern ease of use, reliability and scalability companies need to make web data an integral part of their business. Consider this: just as Stripe has done for online payments and Twilio for business communication, modern Web Data Integration (WDI) tools are now empowering organizations in all markets and of all sizes to capture and act on the endless ocean of web data.

REFERENCES
1: A. Griem & O. Marenzi, Web Data Integration - Leveraging the Ultimate Dataset [White paper], retrieved March 2019, Opimas Research
